DataStructure Sort

Lower Bound of Time Complexity

Inversion

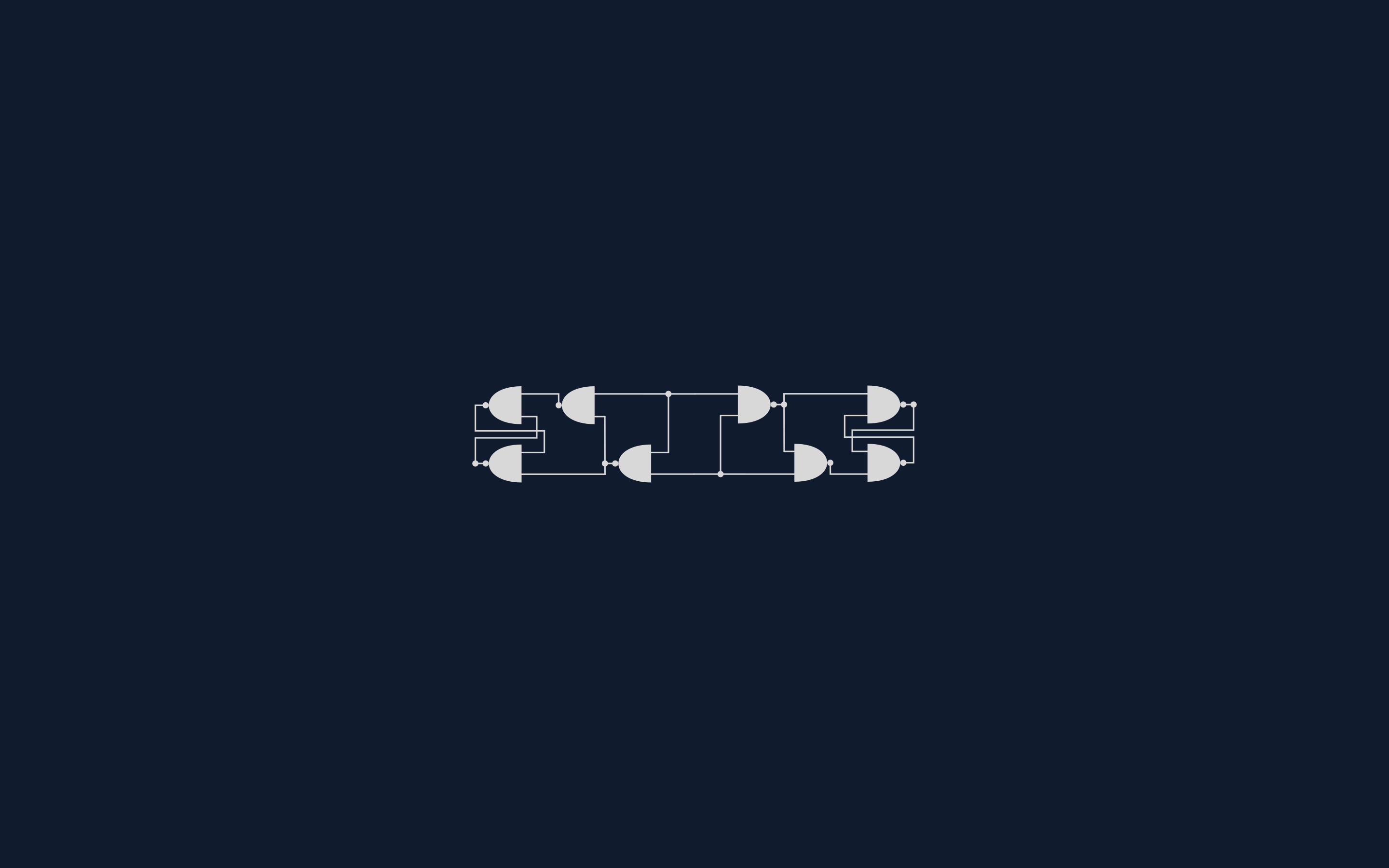

For index i and j, if A[i] > A[j] then (i, j) is an inversion.

For insertion sort:

Theorem A: For N different elements, the average number of inversions are

Theorem B: For any algorithm which only swaps the adjacent 2 elements, the average time complexity is:

From B we know that if we want to make our algorithms more efficient, we should eliminate more than one inversions each time! One solution is to swap 2 elements as far as possible.

Shell Sort

Define a decreasing sequence. This sequence is used to separate the whole sequence. The latter ones will not spoil the result of the former ones.

However, the worst case of Shell Sort will be:

From some of the worst cases we know that if the increments are not coprime to each other, the sequence may not be effective.

There are, of course, some other incremental sequences:

- Hibbard:

The worst case: - Sedgewick

Heap Sort

This is based on Selection Sort. By using minHeap, we can find the minimum element quickly.

This algorithm, however, needs an extra space and it also needs some extra time to copy the elements.

In reality, the algorithm uses the maxHeap and then adjust this heap

1 | void HeapSort(ElementType A[], int N) { |

Theorem: The average time consumed for heap sort is:

Remark: Although this sorting method looks more efficient, it may not perform better than Shell sorting using Sedgewick increment sequence.

Merge Sort

The core concept is merging 2 sub sequence.

Divide and Conquer

1 | void MSort(ElementType A[], ElementType TmpA[], int L, int RightEnd) { |

Non-recursive algorithm

1 | void MergePass(ElementType A[], ElementType TmpA[], int N, int length) { |